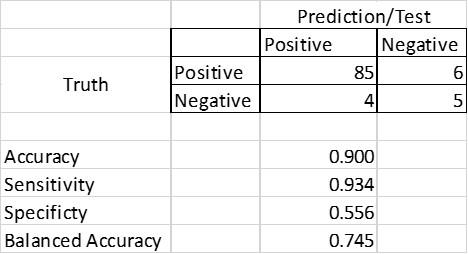

Most people know what accuracy means: count how many guesses you made and how many of those guesses you got correct. In the case of a diagnostic test, this is simply the number of correct answers (true positives plus true negatives) divided by the total number of answers (true positives + false positives + true negatives + false negatives). For brevity, let's denote the four quantities in the denominator as TP, FP, TN, and FN.

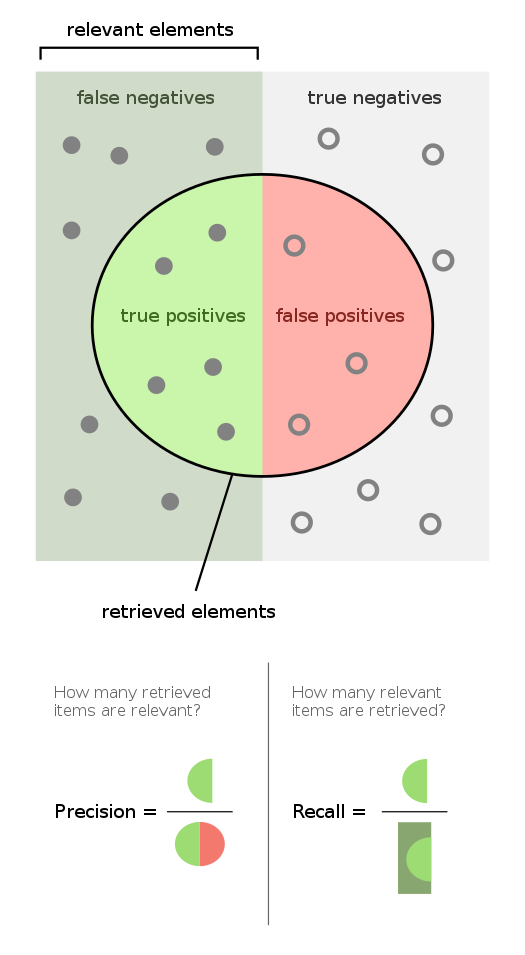
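As a minimal sketch, the accuracy calculation described above can be written as a small Python function. The function name and the counts in the usage line are hypothetical, chosen only for illustration.

```python
def accuracy(tp: int, fp: int, tn: int, fn: int) -> float:
    """Share of all answers that are correct: (TP + TN) / (TP + FP + TN + FN)."""
    total = tp + fp + tn + fn
    if total == 0:
        raise ValueError("at least one test result is required")
    return (tp + tn) / total


# Hypothetical counts: 40 + 45 = 85 correct answers out of 100 in total.
example_accuracy = accuracy(tp=40, fp=10, tn=45, fn=5)
print(f"accuracy = {example_accuracy:.3f}")
```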

However, there are other metrics one can use. Recall, also known as sensitivity, is the share of truly positive cases that your test picks up. It is calculated as TP/(TP+FN). Another useful metric is precision, also known as positive predictive value (PPV), which is the share of the positives your test found that are actually positive. It is calculated as TP/(TP+FP).

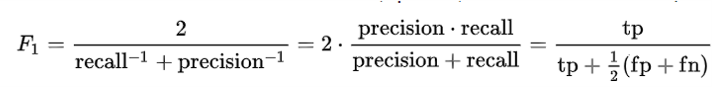
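The two ratios above translate directly into code. The sketch below assumes the counts come from a confusion matrix; the function names and example counts are hypothetical.

```python
def recall(tp: int, fn: int) -> float:
    """Sensitivity: share of truly positive cases the test picks up, TP / (TP + FN)."""
    if tp + fn == 0:
        raise ValueError("recall is undefined when there are no positive cases")
    return tp / (tp + fn)


def precision(tp: int, fp: int) -> float:
    """PPV: share of positive test results that are truly positive, TP / (TP + FP)."""
    if tp + fp == 0:
        raise ValueError("precision is undefined when the test never returns positive")
    return tp / (tp + fp)


# Hypothetical counts: 40 true positives, 5 false negatives, 10 false positives.
print(f"recall    = {recall(tp=40, fn=5):.3f}")
print(f"precision = {precision(tp=40, fp=10):.3f}")
```

Raising an error when a denominator is zero is one possible design choice; some libraries return 0 with a warning instead.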

Another way to measure test accuracy is with the F-score or F-measure. For instance, the F1 score is the harmonic mean of precision and recall, and it ranges from 1.0 (perfect precision and recall) down to 0.0 (precision or recall is zero). The F-score is often used to evaluate machine learning algorithms, including natural language processing models. The F1 score is calculated as:

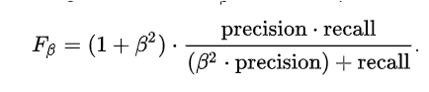

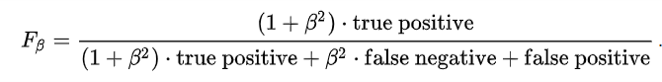
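Because F1 is the harmonic mean of precision and recall, it can be sketched in a few lines. The function name is hypothetical, and returning 0.0 when both inputs are zero is an assumed convention rather than something the text specifies.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall: 2PR / (P + R)."""
    if precision + recall == 0:
        # Assumed convention: treat the 0/0 case as the worst possible score.
        return 0.0
    return 2 * precision * recall / (precision + recall)


# The harmonic mean is pulled toward the smaller of the two values:
# precision 0.9 with recall 0.1 gives 0.18, well below their arithmetic mean of 0.5.
print(f"F1 = {f1_score(0.9, 0.1):.2f}")
```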

A more general form of the F-score is Fβ, which uses a positive factor β chosen such that recall (i.e., sensitivity) is considered β times as important as precision (i.e., PPV). Fβ can be calculated as (1 + β²) × precision × recall / (β² × precision + recall); setting β = 1 recovers the F1 score.
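A short sketch of Fβ using its standard definition follows. The function name, the default value of β, and the handling of a zero denominator are assumptions made for illustration.

```python
def f_beta(precision: float, recall: float, beta: float = 1.0) -> float:
    """F-beta: (1 + beta^2) * P * R / (beta^2 * P + R)."""
    if beta <= 0:
        raise ValueError("beta must be positive")
    beta_sq = beta * beta
    denominator = beta_sq * precision + recall
    if denominator == 0:
        # Assumed convention: score 0.0 when both precision and recall are zero.
        return 0.0
    return (1 + beta_sq) * precision * recall / denominator


# With precision 0.5 and recall 1.0, a larger beta rewards the strong recall more.
for beta in (0.5, 1.0, 2.0):
    print(f"beta = {beta}: F = {f_beta(0.5, 1.0, beta):.3f}")
```

With β = 2 the score moves toward the recall value, and with β = 0.5 it moves toward the precision value.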

Another accuracy measure is known as balanced accuracy. Balanced accuracy is simply the arithmetic mean of sensitivity and specificity, where specificity is the share of truly negative cases that the test correctly identifies, TN/(TN+FP). That is: [TP/(TP+FN) + TN/(TN+FP)]/2.

Consider the example below, where the test has very good sensitivity (0.934) but fairly poor specificity (0.556). In this example, most people are actually positive, so the overall accuracy is dominated by the sensitivity and looks fairly good (0.900). Balanced accuracy, by contrast, weights positive and negative cases equally, as if the population were evenly split between them, and comes out at only 0.745.
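The figures quoted above can be checked with a few lines of Python. The sketch uses only the sensitivity, specificity, and accuracy values from the text. The implied share of positive cases is not stated in the example; it is derived here from the identity accuracy = sensitivity × p + specificity × (1 − p), and the function names are hypothetical.

```python
def balanced_accuracy(sensitivity: float, specificity: float) -> float:
    """Arithmetic mean of sensitivity and specificity."""
    return (sensitivity + specificity) / 2


def implied_positive_share(accuracy: float, sensitivity: float, specificity: float) -> float:
    """Solve accuracy = sensitivity * p + specificity * (1 - p) for p."""
    if sensitivity == specificity:
        raise ValueError("p cannot be recovered when sensitivity equals specificity")
    return (accuracy - specificity) / (sensitivity - specificity)


sensitivity, specificity, overall_accuracy = 0.934, 0.556, 0.900

# Balanced accuracy from the two rates quoted in the text.
print(f"balanced accuracy      = {balanced_accuracy(sensitivity, specificity):.3f}")
# Derived (not quoted) share of truly positive cases consistent with those figures.
print(f"implied positive share = "
      f"{implied_positive_share(overall_accuracy, sensitivity, specificity):.2f}")
```

The derived share of roughly nine positives in ten is why the plain accuracy sits so close to the sensitivity.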