You are the CEO of a health plan with millions of beneficiaries. If you have demographic and health status information on these beneficiaries, can you predict how much each beneficiary will cost you? If so, how accurate will your prediction be?

Most likely, you will use a risk adjustment model to predict how much each beneficiary is expected to cost during the upcoming year.

Today I begin a brief series on risk adjustment models. In this first post, I examine statistics that measure how well a risk adjustment model predicts beneficiary health care costs.

Specifically, I review five measures of a model's goodness of fit:

- R-squared

- Cumming’s Prediction Measure (CPM)

- Mean Absolute Prediction Error (MAPE)

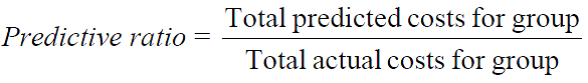

- Predictive Ratios

- Grouped R-squared

Examples of how to calculate each of these are included in this spreadsheet. The discussion below draws largely on a report by Ash et al. (2005).

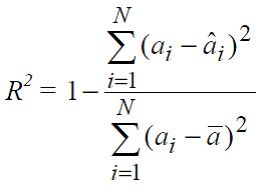

R² indicates the proportion of the variation in the variable of interest (here, cost per person) that is accounted for by the prediction model. R² is a fraction ranging from 0 to 1, representing between 0 and 100 percent of explained variation. If R² = 1, the model explains all of the variation in cost per person. When comparing models, the one with the R² closest to 1 yields individual predictions that are, on average, closest to actual outcomes in the squared-error sense. One weakness of R² is that, because it is built on squared errors, it gives more weight to large errors and is therefore more likely to be influenced by persons with large claims.
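To make the definition concrete, here is a minimal Python sketch that computes R² as 1 − Σ(Yᵢ − Ŷᵢ)² / Σ(Yᵢ − Ȳ)², where Yᵢ is a person's actual cost, Ŷᵢ the predicted cost, and Ȳ the mean actual cost. The function name `r_squared` and the four-person cost data are hypothetical, made up for illustration rather than taken from Ash et al.

```python
def r_squared(actual, predicted):
    """Share of the variation in actual costs explained by the predictions."""
    n = len(actual)
    mean_actual = sum(actual) / n
    # Squared prediction errors: large misses dominate this sum.
    ss_residual = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    # Squared deviations of actual costs from their mean.
    ss_total = sum((a - mean_actual) ** 2 for a in actual)
    return 1 - ss_residual / ss_total


# Hypothetical annual costs (dollars) for four beneficiaries.
actual = [1000, 2000, 3000, 10000]
predicted = [1500, 2000, 2500, 8000]
print(round(r_squared(actual, predicted), 2))  # 0.91
```

In this made-up example, the single $10,000 beneficiary contributes 4,000,000 of the 4,500,000 total squared error, which illustrates why R² is sensitive to persons with large claims.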

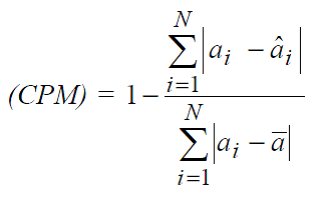

Cumming’s Prediction Measure (CPM) is advantageous in that it is a standardized measure similar to R², but because it is based on absolute rather than squared errors, it gives equal weight to small and large errors. CPM is defined analogously to R², with values ranging from 0 to 1: it gives the proportion of the sum of absolute deviations of individual costs from their mean that is explained by the risk model.
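A minimal sketch of the calculation, assuming (as the text describes) that deviations are measured from the mean: CPM = 1 − Σ|Yᵢ − Ŷᵢ| / Σ|Yᵢ − Ȳ|. The function name `cpm` and the cost figures are hypothetical illustrations.

```python
def cpm(actual, predicted):
    """Cumming's Prediction Measure: 1 - sum|Y - Yhat| / sum|Y - mean(Y)|."""
    n = len(actual)
    mean_actual = sum(actual) / n
    # Absolute prediction errors: each dollar of error counts the same.
    abs_residual = sum(abs(a - p) for a, p in zip(actual, predicted))
    # Absolute deviations of actual costs from their mean.
    abs_total = sum(abs(a - mean_actual) for a in actual)
    return 1 - abs_residual / abs_total


# Hypothetical annual costs (dollars) for four beneficiaries.
actual = [1000, 2000, 3000, 10000]
predicted = [1500, 2000, 2500, 8000]
print(cpm(actual, predicted))  # 0.75
```

Here the $10,000 beneficiary accounts for $2,000 of the $3,000 total absolute error (two-thirds), compared with 4,000,000 of 4,500,000 (about 89 percent) of the total squared error, so a single large claim carries less weight under CPM.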

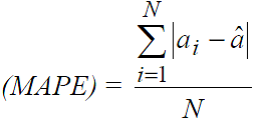

MAPE is similar to the CPM in that it is expressed in absolute rather than squared terms. Because equal weight is given to small and large errors, persons with large claims have much less influence on MAPE than on R². However, one disadvantage of this measure is that it is not standardized: it is expressed in units of cost (e.g., dollars), which makes comparisons across studies harder to interpret.
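As a minimal sketch, MAPE here is the mean absolute prediction error, (1/N) Σ|Yᵢ − Ŷᵢ|, not the similarly abbreviated mean absolute percentage error. The function name `mape` and the data are hypothetical.

```python
def mape(actual, predicted):
    """Mean absolute prediction error, in the same units as cost (e.g., dollars)."""
    errors = [abs(a - p) for a, p in zip(actual, predicted)]
    return sum(errors) / len(errors)


# Hypothetical annual costs (dollars) for four beneficiaries.
actual = [1000, 2000, 3000, 10000]
predicted = [1500, 2000, 2500, 8000]
print(mape(actual, predicted))  # 750.0
```

The result is a dollar amount with no fixed upper bound, so a MAPE of $750 means something quite different in a population averaging $4,000 per person than in one averaging $40,000.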

Measures of predictive accuracy can also be applied at the group level. For instance, one can examine the predictive ratio (total predicted cost divided by total actual cost) for specific subpopulations to determine whether the model systematically overstates or understates costs for particular groups of beneficiaries. Predictive ratios less than 1 indicate that the model predicts lower costs for the group than were actually observed; predictive ratios greater than 1 indicate that the model predicts higher health care costs for the group than were observed.
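A minimal sketch of predictive ratios by subgroup, assuming each beneficiary carries one group label. The function name `predictive_ratios`, the age-group labels, and the costs are all hypothetical.

```python
from collections import defaultdict


def predictive_ratios(groups, actual, predicted):
    """Total predicted cost / total actual cost for each subgroup.

    Assumes every group has a nonzero total actual cost.
    """
    pred_sum = defaultdict(float)
    actual_sum = defaultdict(float)
    for g, a, p in zip(groups, actual, predicted):
        pred_sum[g] += p
        actual_sum[g] += a
    return {g: pred_sum[g] / actual_sum[g] for g in actual_sum}


# Hypothetical subgroup labels and annual costs (dollars).
groups = ["under_65", "under_65", "65_plus", "65_plus"]
actual = [1000, 2000, 3000, 10000]
predicted = [1500, 2000, 2500, 8000]
for group, ratio in predictive_ratios(groups, actual, predicted).items():
    print(group, round(ratio, 3))
# Prints:
# under_65 1.167
# 65_plus 0.808
```

In this made-up example the model overpredicts for the younger group (ratio above 1) and underpredicts for the older group (ratio below 1), even though both groups are pooled in a single individual-level fit statistic.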

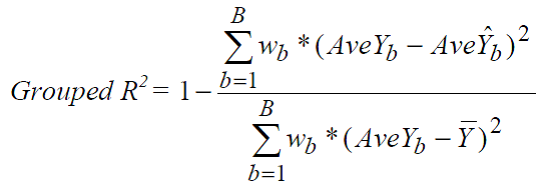

One can also use a Grouped R². The Grouped R² is analogous to the standard R², except that each partition (group of beneficiaries) is treated as a distinct unit upon which the R² is estimated. These partitions are weighted by importance, where importance is determined by the researcher.

Source:

- Arlene S. Ash, Nancy McCall, Jenn Fonda, Amresh Hanchate, Jeanne Speckman. Risk Assessment of Military Populations to Predict Health Care Cost and Utilization. RTI Project Number 08490.006, November 2005.
